Top AI Tools to Test Consumer Attention Across Packaging, UX, and OOH

The average person encounters 6,000–10,000 ads daily. A packaging design sits next to 47 competitors. Mobile app onboarding gets just 3 seconds before users bounce, and billboards? People drive past at 65 mph while checking their phones.

Most creative teams rely on gut feelings, slow focus groups, or post-launch analytics, often discovering insights too late.

Today, AI tools can predict where people will look, what they will miss, and whether a message will land, all before production or media spend. Attention has become a measurable, predictive creative KPI, with predictions that can exceed 90% accuracy when compared with real-world eye-tracking studies, and leading teams now simulate outcomes before going live.

Visual noise continues to increase while attention windows shrink. Relying on post-launch metrics or gut instinct leaves too much to chance.

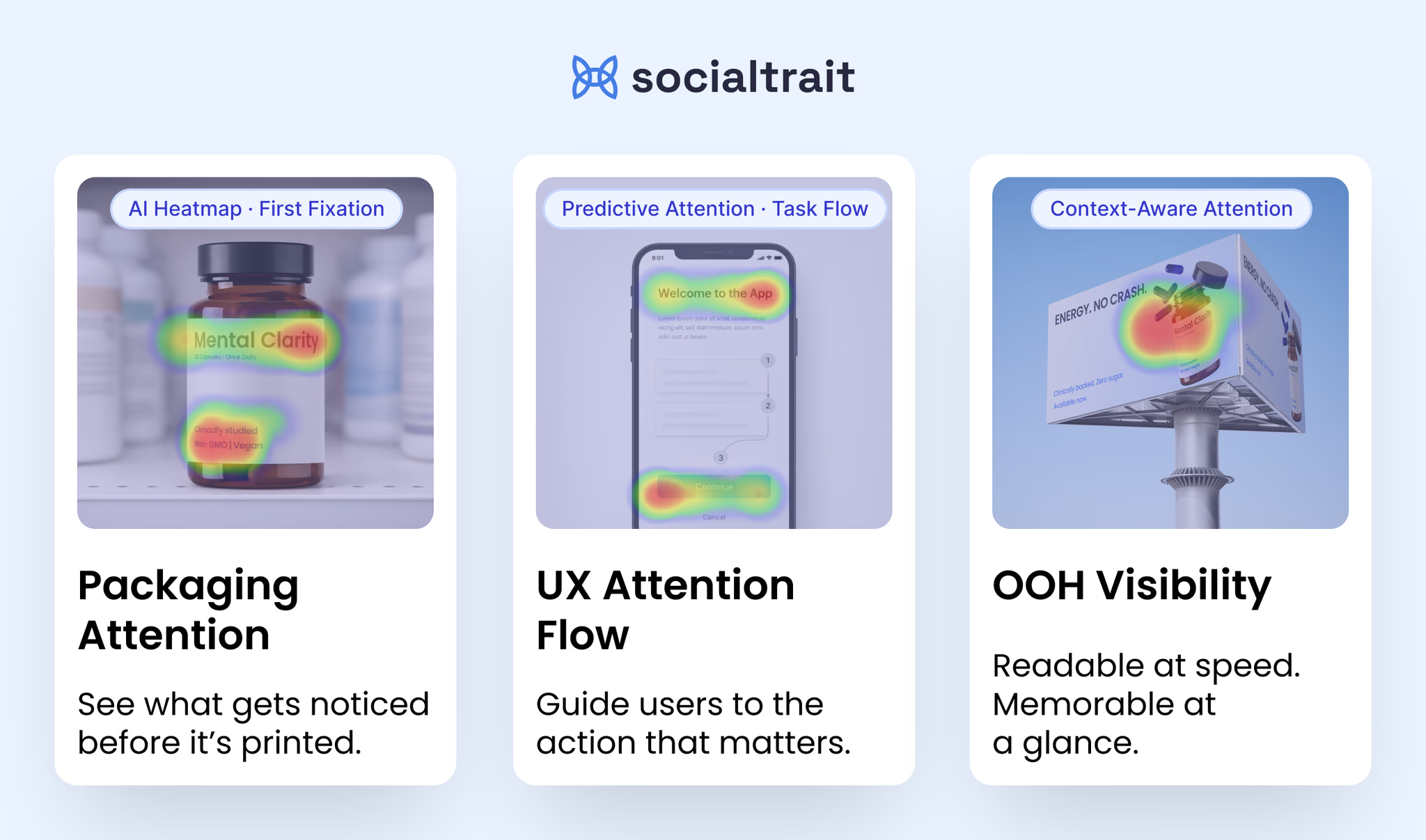

AI models trained on millions of real eye-tracking observations can now predict human attention with high accuracy. As a result, creative teams can test shelf impact before printing, optimize UX flows before development, and validate out-of-home ads for legibility at real-world speeds.

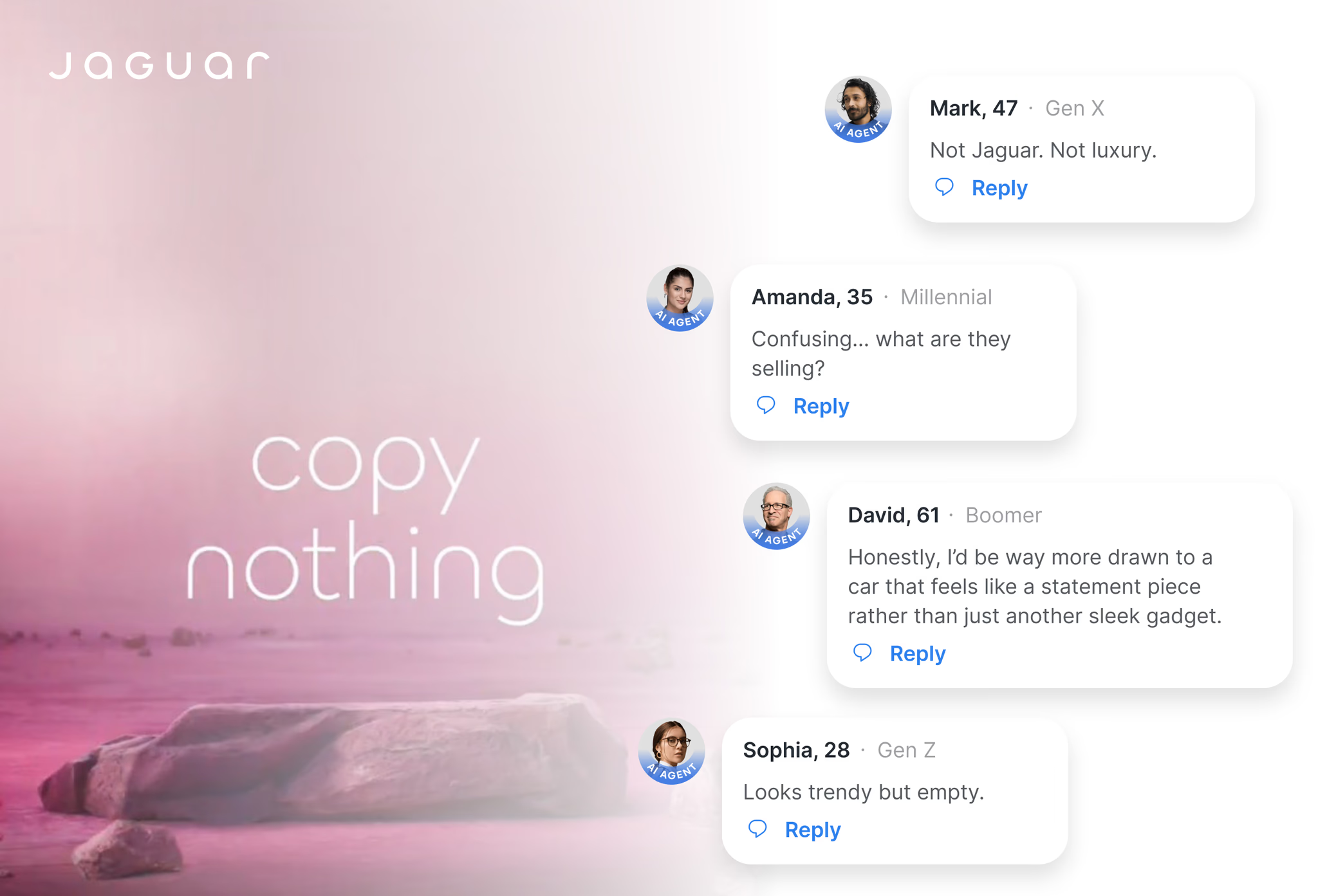

This shift mirrors broader findings from creative effectiveness research. Harvard Business Review has shown that brands often fail when they disrupt familiar visual patterns consumers rely on, especially during rebrands, reinforcing why attention and comprehension must be evaluated together before launch.

The next step goes beyond prediction alone. When attention data is combined with behavioral and demographic modeling, teams can understand not only where attention goes, but why it goes there and how different audiences respond.

AI attention testing breaks visual perception into measurable components that matter across formats:

- First fixation points: where the eye lands in the first moments. If the product, price, or CTA is missed here, the creative loses impact immediately.
- Visual hierarchy: how attention moves after the first glance. Effective designs guide the eye through a clear sequence rather than scattering focus.
- Blind spots: areas that receive little or no attention. Messages placed here may be well designed but effectively invisible.
- Memory potential: elements that remain top-of-mind after exposure. Attention alone does not guarantee recall.
- Message comprehension: whether viewers understand what they are seeing. Research consistently shows that attention without comprehension has limited business value.
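To make the first three components concrete, here is a minimal Python sketch. It assumes an attention tool can export its predicted heatmap as a 2D grid of saliency values, and that you mark areas of interest (AOIs) such as the product, price, or CTA as boxes. The function names, the box format, the inhibition-of-return radius, and the blind-spot threshold are all hypothetical choices for illustration, not any vendor's API. The scanpath step uses the classic winner-take-all with inhibition-of-return heuristic as a stand-in for however a given tool actually sequences fixations. Memory potential and message comprehension cannot be read off a heatmap, so the sketch leaves them out.

```python
from typing import Dict, List, Tuple

Grid = List[List[float]]
Box = Tuple[int, int, int, int]  # hypothetical AOI format: (x0, y0, x1, y1), end-exclusive


def aoi_shares(sal: Grid, aois: Dict[str, Box]) -> Dict[str, float]:
    """Fraction of the total predicted attention that falls inside each AOI."""
    total = sum(sum(row) for row in sal) or 1.0
    return {
        name: sum(sal[y][x] for y in range(y0, y1) for x in range(x0, x1)) / total
        for name, (x0, y0, x1, y1) in aois.items()
    }


def scanpath(sal: Grid, n_fixations: int, radius: int) -> List[Tuple[int, int]]:
    """Winner-take-all with inhibition of return: take the peak, suppress around it, repeat."""
    grid = [row[:] for row in sal]
    h, w = len(grid), len(grid[0])
    path: List[Tuple[int, int]] = []
    for _ in range(n_fixations):
        y, x = max(((r, c) for r in range(h) for c in range(w)),
                   key=lambda p: grid[p[0]][p[1]])
        if grid[y][x] <= 0:
            break  # nothing salient left to look at
        path.append((x, y))
        # Inhibition of return: zero a square neighbourhood so the next pick moves on.
        for r in range(max(0, y - radius), min(h, y + radius + 1)):
            for c in range(max(0, x - radius), min(w, x + radius + 1)):
                grid[r][c] = 0.0
    return path


def hierarchy(path: List[Tuple[int, int]], aois: Dict[str, Box]) -> List[str]:
    """AOIs in the order the simulated scanpath first enters them (path[0] ~ first fixation)."""
    order: List[str] = []
    for x, y in path:
        for name, (x0, y0, x1, y1) in aois.items():
            if x0 <= x < x1 and y0 <= y < y1 and name not in order:
                order.append(name)
    return order


def blind_spots(shares: Dict[str, float], threshold: float = 0.05) -> List[str]:
    """AOIs whose share of predicted attention falls below a (hypothetical) threshold."""
    return [name for name, s in shares.items() if s < threshold]


# Toy 4x6 saliency grid and three AOIs, purely illustrative.
sal = [
    [0, 0, 0, 0, 0, 0],
    [0, 9, 1, 0, 0, 0],
    [0, 1, 0, 0, 5, 0],
    [0, 0, 0, 0, 0, 1],
]
aois = {"product": (0, 0, 3, 3), "cta": (3, 1, 6, 3), "price": (0, 3, 6, 4)}
shares = aoi_shares(sal, aois)
path = scanpath(sal, n_fixations=3, radius=1)  # [(1, 1), (4, 2)]
print(hierarchy(path, aois))                   # ['product', 'cta']
print(blind_spots(shares, threshold=0.1))      # ['price']
```

Here the first fixation lands on the product, attention then moves to the CTA, and the price line draws under 10% of predicted attention, which is exactly the "well designed but effectively invisible" case.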

These signals behave differently across channels. A packaging design may perform well on shelf but fail online. A UX flow may appear clear in isolation but collapse under cognitive load. OOH creative must work from distance and speed, not just on screen.

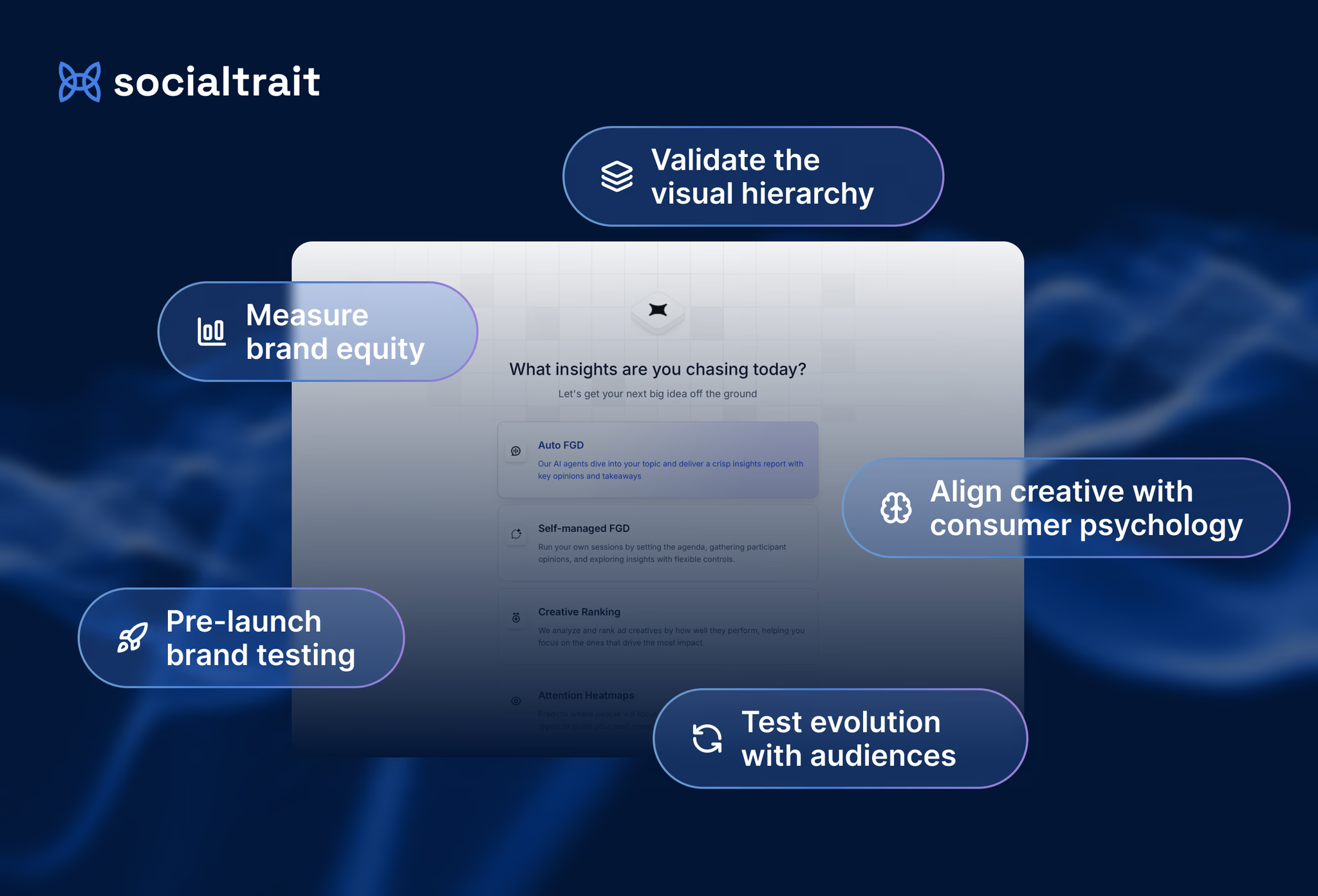

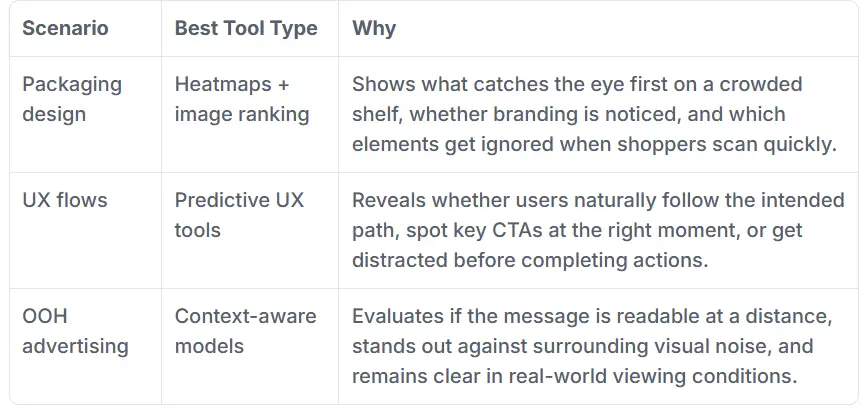

AI attention tools can be categorized into three primary types, each suited to specific use cases.

Predictive AI Heatmaps

Used for packaging design, static ads, hero images, and print creative.

These tools predict attention patterns on static visuals using large eye-tracking datasets. They are fast and scalable, making them ideal for comparing multiple creative options quickly. Their limitation is depth: they do not account for interaction, motion, or context.
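Comparing options quickly usually comes down to one number per variant. A minimal sketch, assuming each variant's predicted heatmap is available as a grid and that you know where the key element (say, the product) sits in each layout: rank the variants by the share of attention that lands on that element. The scoring rule, names, and toy data are hypothetical.

```python
from typing import Dict, List, Tuple

Grid = List[List[float]]
Box = Tuple[int, int, int, int]  # hypothetical format: (x0, y0, x1, y1), end-exclusive


def share_in_box(sal: Grid, box: Box) -> float:
    """Share of a variant's total predicted attention that lands inside one box."""
    x0, y0, x1, y1 = box
    total = sum(sum(row) for row in sal) or 1.0
    return sum(sal[y][x] for y in range(y0, y1) for x in range(x0, x1)) / total


def rank_variants(variants: Dict[str, Grid], key_box: Dict[str, Box]) -> List[Tuple[str, float]]:
    """Rank creative variants by attention share on their key element, highest first."""
    scores = {name: share_in_box(sal, key_box[name]) for name, sal in variants.items()}
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)


# Toy 2x2 saliency maps for three pack layouts; the product sits in a different cell in each.
variants = {
    "A": [[3, 1], [0, 0]],
    "B": [[1, 1], [1, 1]],
    "C": [[0, 2], [2, 0]],
}
key_box = {"A": (0, 0, 1, 1), "B": (1, 1, 2, 2), "C": (0, 1, 1, 2)}
ranking = rank_variants(variants, key_box)  # [('A', 0.75), ('C', 0.5), ('B', 0.25)]
```

Because the score only looks at a static grid, it inherits the limitation above: it says nothing about motion, interaction, or shelf context.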

UX Attention Tools (Flow-Based)

Used for onboarding, checkout flows, pricing pages, and digital products.

These tools simulate task-based attention, helping teams understand whether users can find CTAs, form fields, or key navigation elements. They perform well in digital environments but are not designed for physical or environmental media.
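Flow-based testing asks a different question on each screen: does the user reach the element they need before attention runs out? A minimal sketch, assuming a tool that returns a predicted viewing order of named elements per screen. The `Screen` structure, the element names, and the fixation budget are hypothetical; the budget stands in for a short attention window such as the 3 seconds mentioned for onboarding, but how seconds translate into fixations is an assumption you would need to set yourself.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Screen:
    name: str
    target: str                 # element the user must find to move on (e.g. the CTA)
    predicted_order: List[str]  # elements in the order the model predicts they are viewed


def fixations_to_target(screen: Screen) -> Optional[int]:
    """1-based position of the target in the predicted viewing order, or None if never viewed."""
    if screen.target in screen.predicted_order:
        return screen.predicted_order.index(screen.target) + 1
    return None


def first_breakdown(flow: List[Screen], budget: int) -> Optional[str]:
    """Name of the first screen whose target is missed or found too late, else None."""
    for screen in flow:
        n = fixations_to_target(screen)
        if n is None or n > budget:
            return screen.name
    return None


# Hypothetical onboarding flow with predicted viewing orders per screen.
onboarding = [
    Screen("welcome", "continue", ["logo", "headline", "continue"]),
    Screen("plan", "select_plan", ["price", "badge", "faq", "legal", "select_plan"]),
    Screen("signup", "email_field", ["headline", "email_field", "submit"]),
]
print(first_breakdown(onboarding, budget=3))  # plan
```

Each screen passes or fails on its own, which mirrors the point above: a flow can look clear screen by screen in isolation and still lose users at one step.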

OOH Attention Tools (Contextual AI)

Used for billboards, transit ads, and large-format displays.

These models account for distance, movement, lighting, and surrounding noise. They assess legibility and contrast in real-world conditions but are highly specialized.
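Two of these OOH factors reduce to simple arithmetic. Exposure time is legible distance divided by speed, and contrast can be approximated with the WCAG 2.x contrast-ratio formula. WCAG was written for screen accessibility, so applying it to a billboard is a rough proxy and not necessarily what dedicated OOH models compute; the 150 m legible distance and the colours in the example are hypothetical.

```python
from typing import Tuple

RGB = Tuple[int, int, int]
MPH_TO_MPS = 1609.344 / 3600  # 1 mile is exactly 1609.344 m


def exposure_seconds(legible_distance_m: float, speed_mph: float) -> float:
    """Time a driver has from first legible sight of the board until passing it."""
    return legible_distance_m / (speed_mph * MPH_TO_MPS)


def _linear(channel: int) -> float:
    """sRGB channel (0-255) to linear light, per the WCAG 2.x relative-luminance definition."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb: RGB) -> float:
    r, g, b = (_linear(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(fg: RGB, bg: RGB) -> float:
    """WCAG contrast ratio, from 1:1 (identical colours) to 21:1 (black on white)."""
    l1, l2 = sorted((relative_luminance(fg), relative_luminance(bg)), reverse=True)
    return (l1 + 0.05) / (l2 + 0.05)


# Hypothetical board: legible from 150 m, passed at 65 mph, yellow text on a dark blue field.
seconds = exposure_seconds(150, 65)  # roughly 5.2 s
ratio = contrast_ratio((255, 221, 0), (0, 40, 100))
```

At 65 mph a driver covers about 29 m per second, so even a board legible from 150 m gets only around five seconds of potential viewing, shared with the road and the phone.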

No single tool covers every scenario. The most effective teams layer multiple approaches to gain a complete view of attention performance.

Most AI attention tools answer where people look, but not what that attention means.

They do not explain comprehension, emotional response, or intent. They lack demographic and psychographic context. They cannot compare how different audiences interpret the same creative.

This limitation is widely acknowledged in industry research. WARC notes that while AI excels at measuring attention signals, creative effectiveness still depends on interpretation, context, and audience relevance.

If you rely only on attention heatmaps, you are optimizing visibility, not outcomes.