AI Vulnerability in the US: How Misinformation Is Shaping a Nation's Trust

In a country where newsfeeds move faster than facts, Americans are facing a real crisis of trust. With AI-generated content spreading across every screen and platform, people aren’t just confused; they’re second-guessing everything. What’s real? What’s AI? And who can you believe?

These questions came into sharp focus during the 2024 presidential election, when four out of five Americans said they were worried about AI’s role in spreading misinformation. Additionally, Americans express little confidence in tech companies' ability to prevent AI misuse during elections, highlighting a broader institutional trust gap.

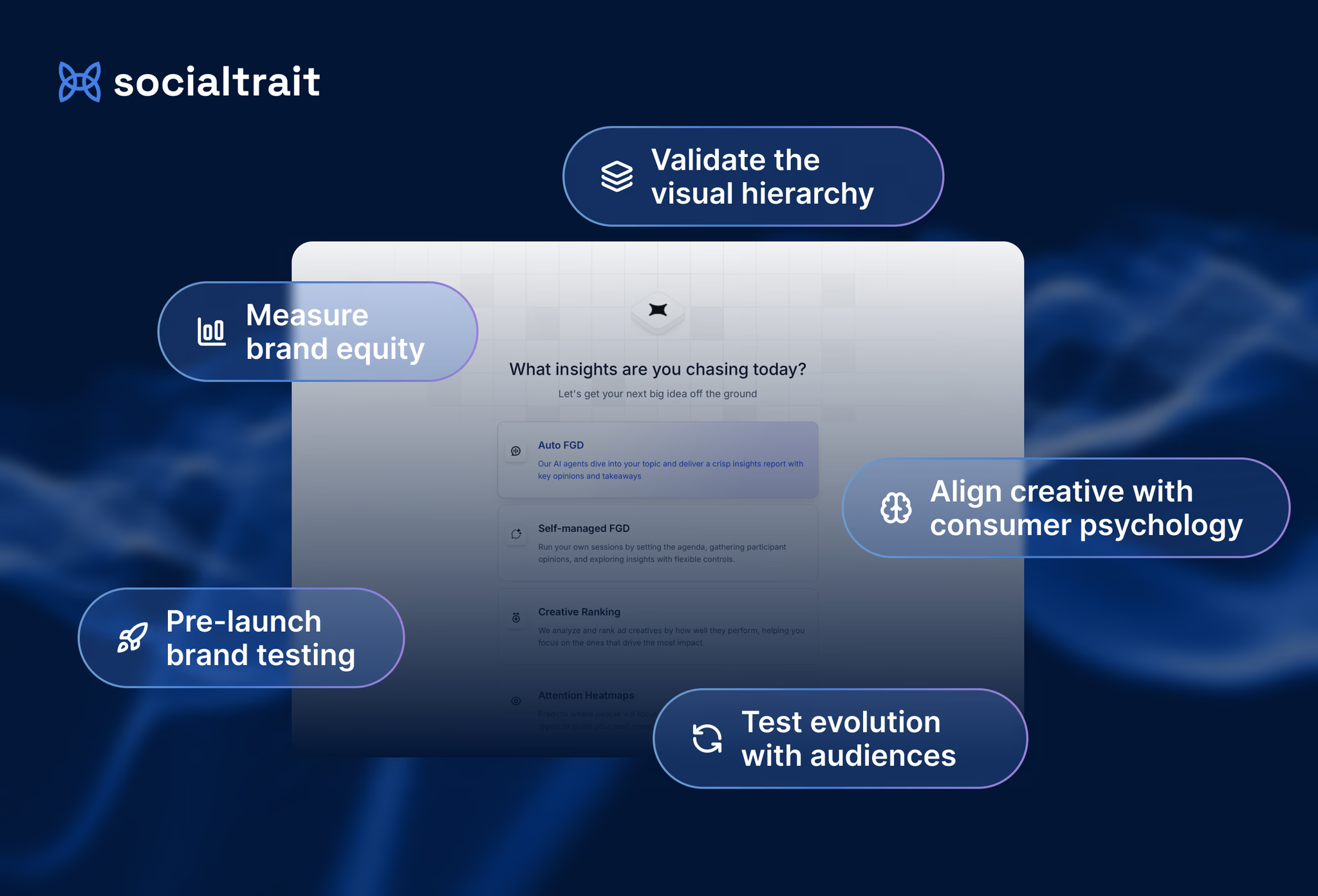

To understand how this trust gap plays out, Socialtrait conducted a study titled “Evaluating Online Information Trustworthiness” using a mixed-methods approach. The research combined a quantitative survey with AI-simulated focus group discussions, all powered by the Socialtrait platform.

The researcher defined the study’s objective and context, and a virtual group of AI agents, each designed to reflect real U.S. demographic, regional, and behavioral traits, responded to open-ended questions. This method surfaced patterns in how Americans evaluate, share, and trust AI-generated content across generations and digital environments.

Across every demographic, from Gen Z to Baby Boomers, participants report rising levels of caution. It’s no longer just media-savvy users who second-guess what they see; even non-tech users are growing skeptical.

"Honestly, it's making everyone a bit more paranoid, even my non-techy friends. Biggest worry? It's getting harder to trust *anything* you see online, which makes real conversations harder, you know?" → Millennial

Whether on TikTok or Facebook, skepticism is becoming a default response. It’s no longer a question of who is vulnerable: we all are.

Key Data:

- 97% of respondents believe AI-generated persuasive content should require disclosure. Only 1% find it acceptable without any label.

- 72% say they’re not very likely to believe controversial videos unless they can verify them.

- 81% of respondents say they sometimes check account authenticity before trusting content, which suggests that vigilance is inconsistent.

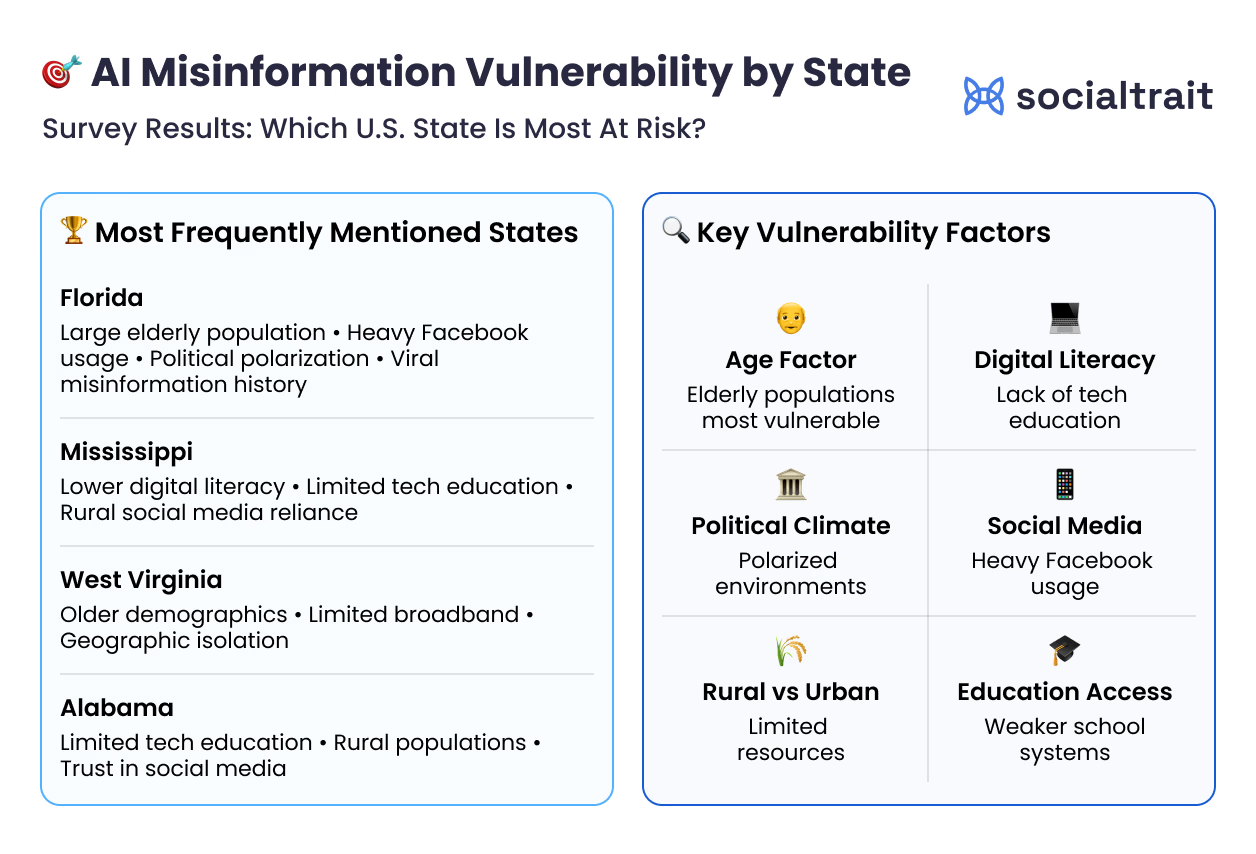

When asked which states are most vulnerable to AI-powered misinformation, respondents mentioned these four most often:

- Florida: Aging population, high Facebook use, political polarization

- Mississippi: Low digital literacy, rural reliance on social media

- West Virginia: Limited broadband, older demographics, geographic isolation

- Alabama: Similar trends to Mississippi; low access to tech education

People believe they can detect AI-generated content. But when tested, even those in tech fields report only moderate accuracy.

"I'm a dev and even I struggle to tell sometimes, maybe 40% accurate on a good day, the models are getting scary good and anyone claiming higher success rates is probably fooling themselves." → Millennial

This confidence-detection gap is dangerous. It means people are sharing content they think they've vetted but haven't.

Recent research on misinformation susceptibility has shown that younger generations, particularly Generation Z, often overestimate their ability to detect false information despite being highly engaged with digital content.

Generational Detection Confidence:

- Gen Z: 88% somewhat/very confident

- Millennials: 84%

- Gen X: 80%

- Boomers: 63%

Most Americans Feel Unprepared

While confidence runs high, actual preparedness is low. Only 3% of people say they feel fully equipped to navigate AI-generated content. A staggering 83% feel only “somewhat” prepared, and 14% don’t feel prepared at all.

Baby Boomers are the most vulnerable:

- 26% say they feel completely unprepared

- Only 5% say they’re ready

- Boomers are nearly 3x more likely to feel unprepared than Gen Z

People are more skeptical on public platforms but let their guard down in private channels. DMs, group chats, and even Stories become easy entry points for misinformation because trust is transferred from person to content.

"But honestly, sometimes I just DM a friend and ask if it looks real to them crowdsourcing vibes." → Gen Z

In these informal spaces, content often spreads with a disclaimer (“not sure if this is real, but...”). The disclaimer does little to contain it: each share still increases exposure and acceptance.

There is strong demand for platforms to clearly label AI-generated content. Users want to know what’s real, what’s synthetic, and who created it.

"If I could set the rules, I'd want any AI-generated content to be clearly labeled, like, no sneaky fine print, just obvious tags so people know what they're looking at." → Millennial

This isn’t just a Gen Z thing. Millennials, Gen X, and Boomers all express the same need.

AI Fatigue Is Setting In

The more experience people have with AI tools, the more skeptical they become. But that skepticism is exhausting. The scale of the challenge shows in daily habits: Americans spend an average of 3.5 hours a day on smartphones, much of it engaging with content whose authenticity is increasingly difficult to verify.

"The more I play with these models the less I trust anything online these days ... it's exhausting." → Gen Z

There’s a growing tension between vigilance and burnout. People are tired of constantly checking, verifying, and second-guessing. When fatigue sets in, scrutiny drops and misinformation slips through.